Day 90 as a PM: Iterating on your product after first launch

How do you iterate on your product after gathering insights from the first launch?

About us: Andrew (AM) and Chandrika (CM) met during their MBA program at MIT Sloan and connected over their passion for product, growth and paying forward the help they got transitioning into product management. AM currently works as a Product Manager at Moveworks and CM works as a Product Manager at DocuSign.

For this post, we have a guest editor, Ekow Essel (EE) who is a Product Manager at Facebook. Ekow currently focuses on Platform-based Ads products. Previously, he was involved in launching Facebook Dating in the US.

In our previous post, we shared how we prepare for a successful product launch, what the end to end process looks like and resources that you can customize to fit your needs while preparing for a product launch.

In this post we will be covering the post-launch process that we have adopted ourselves and/or learnt from other PMs around us. The process involves reflecting on and analyzing the 3Ps of product, process and people. While all three of those are critical, we are going to focus on the “product” aspect here.

Q1. How do you monitor the performance of your product/feature? 📈

AM: I usually have two types of metrics to measure and track: ML-based metrics (Precision, Coverage, Recall) and Customer Impact metrics (Tickets Accelerated, Resolved, etc.). These metrics would have been established earlier in the process, starting in the Pilot phase. Once a product or feature is launched, they are actively tracked on both our internal and customer dashboards. If there is a poorly performing metric, I ask myself the following questions:

Is the data 100% accurate? Sometimes data clean-up is necessary, which is common in a fast-growing company. I would compare notes from estimations and analyze the raw data, even rebuilding it from the bottom up, to identify any anomalies.

If the data is 100% accurate, then did we make correct assumptions for the target goal metric? During the pilot and early stages, before launch, this is usually battle-tested and modified, if needed. I do not see this change after launch.

Is this something that is specific to the customer and can be addressed quickly via self-service tools?

Is this a systemic problem that justifies a change to the feature itself and requires thoughtful engineering design and work?

Internally, we use the open-source Apache Superset, which is also used by tech companies such as Airbnb, for visualizing metrics. The Product Team can quickly create visuals within Superset dashboards, which can be powered by custom queries.

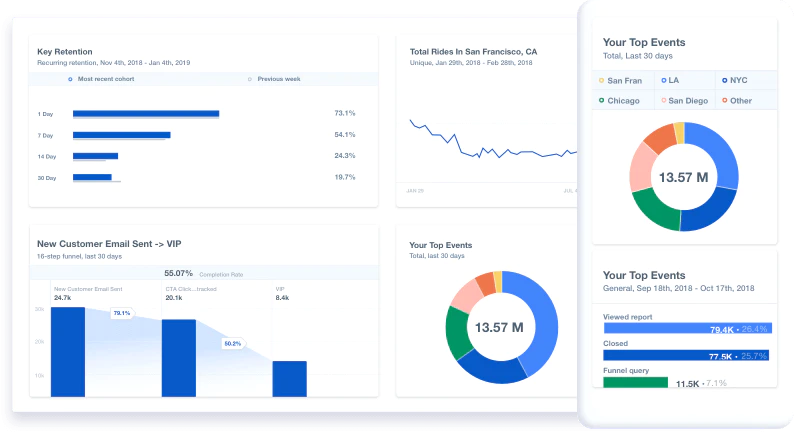

CM: After having defined the KPIs for my product before the launch, I typically create reports and dashboards for the metrics I want to measure.

There are a variety of metrics we measure in our dashboards, based on the type of product. Typically they can be categorized into:

Business Performance (metrics related to revenue, bookings, leads etc.),

Product Usage (user and feature related metrics like volume of users using feature X as a share of total logins etc.) and

Product Quality (support tickets, escalations etc.)

There could also be some other Cross-functional KPIs that we own jointly with another team (example: NPS jointly owned with Customer Success, Support etc.).

I use Mixpanel to create these reports and dashboards that are visible to all team members. Here’s what a sample dashboard could look like:

I would check these dashboards frequently, anywhere from daily right after the launch to a few times a week later on. In case of an A/B test, I would estimate up front when the test is expected to reach statistical significance, and wait until then before concluding it.
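To make the "when will the test reach significance" estimate concrete, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The function names, the default significance level and power, and the traffic numbers in the usage note are assumptions for illustration, not something taken from our actual tooling.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, min_detectable_lift, alpha=0.05, power=0.8):
    """Approximate users needed in each arm of a two-sided two-proportion z-test.

    baseline_rate: current conversion rate (e.g. 0.10 for 10%).
    min_detectable_lift: smallest absolute change worth detecting (e.g. 0.02).
    alpha and power are conventional defaults, assumed here for illustration.
    """
    p1 = baseline_rate
    p2 = baseline_rate + min_detectable_lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


def days_to_significance(n_per_arm, daily_users_per_arm):
    """Rough number of days needed, given steady daily traffic per arm."""
    return math.ceil(n_per_arm / daily_users_per_arm)
```

For example, `days_to_significance(sample_size_per_arm(0.10, 0.02), 500)` gives a rough test duration for a hypothetical feature with a 10% baseline rate, a 2-point minimum lift and 500 users per arm per day. Smaller lifts need far more users, which is why it helps to agree on the minimum lift before the test starts.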

EE: You need to be rigorous with your success/exit criteria at each stage of the product. I invest a good amount of time defining and aligning what success looks like not just for the project overall but for each critical milestone of the product or feature I’m working on. This execution focus is important because you never want to be in a position where you’re questioning whether execution was a contributing factor to failure. From a metrics perspective, the specific data we’re looking for is often going to depend on where we are in the product life cycle. For example, if you're still trying to find product-market fit, you may over-index on performance metrics to make sure you’re driving the requisite amount of value to your end customer. As you move through the life cycle, this performance remains important, but you may shift to prioritize growth while viewing performance as a tracking metric. As far as tracking goes, we have a host of proprietary tools to help us measure our impact.

Q2. How do you decide whether the product works as is or if it needs iteration? 🤔

AM: While measuring the performance of the product, I see whether the metrics are validating or disproving the hypothesis. When a metric is not performing to the uncompromising benchmarks set by our engineering and product team, we analyze the impact on the overall user experience. When making a decision to address it immediately or later, I consider the following:

Will it create such a negative experience that someone would not want to use the product? If so, we will address it, as we always want to create delightful experiences for our users.

Is there a short-term solution that can be put into place, which may not be scalable on our end, but creates a positive experience for users? Taking on some tech debt is fine until we can build a more scalable solution down the road.

With finite engineering capacity, we often have to think critically about what’s best for the company, even if it means putting slight enhancements to the feature/product on hold. Fortunately, our overall Product Objectives are clear and serve as a good guide to know when to address something immediately or later.

CM: If the product and business KPIs show a positive lift and if the product performance is stable, I would say the product works for its intended purpose. Typically I would have started on another project in the backlog already and would continue on that. In the meantime, I would continue gathering insights on the recently launched product to identify areas to optimize and spread out the enhancements over the next few sprints.

EE: If you see the product is not leading to the desired behavior, then you start the iteration process. Based on analysis, there’s generally an allowable threshold for metrics regression. A good metric is indicative of user behavior, and if the data is telling us that the change in behavior is exceeding that allowable threshold, then we do some additional user research to understand why. Our iteration plan depends on how far “off base” our metrics are. If it's at the far end of the spectrum, then we do additional user research to confirm confidence in the hypothesis. If the regression isn’t that far off, we look at the flows again, infer what may be causing these regressions, and make small iterations. This is why your success/exit criteria are so important: they are the roadmap to your product iteration process.
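As a rough illustration of the threshold idea above, the sketch below classifies a metric by how far it has regressed from its baseline. The function name and both cut-off values (5% and 20%) are hypothetical; in practice the thresholds come out of your own success/exit criteria. It also assumes a metric where higher is better.

```python
def triage_metric(baseline, observed, allowable_regression=0.05, severe_regression=0.20):
    """Classify a higher-is-better metric by its relative regression from baseline.

    allowable_regression and severe_regression are hypothetical cut-offs,
    expressed as fractions of the baseline value.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    # Positive when the metric got worse, negative when it improved.
    regression = (baseline - observed) / baseline
    if regression <= allowable_regression:
        return "within threshold: keep monitoring"
    if regression < severe_regression:
        return "moderate: review flows and make small iterations"
    return "severe: run additional user research"
```

Calling `triage_metric(0.40, 0.36)` describes a 10% relative drop, which lands in the middle band under these assumed cut-offs.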

Q3. Do you conduct any post launch user research? What does the research focus on? 💡

AM: I am fortunate to work with a great team of UX researchers who have developed a system to obtain customer feedback, ranging from CSAT surveys to reviews of suboptimal experiences, which are flagged by feedback mechanisms within the product. Often, I combine these insights with other sources of information to decide what needs to be addressed. It's remarkable to see that some specific flows may have significant drop-off because users were confused by the verbiage, either because the wording was poorly written (our copy mistakes) or outdated (specific instructions from customer stakeholders were incorrect).

CM: I believe research is our greatest defense against bad product decisions. Post launch research is a great tool when the insights that we have from data and customer feedback channels are ambiguous or just not enough. For post launch research, I would focus on users who have been exposed to the new feature/product and interview them to understand their experience with it. Part of the PM job as I see it is finding surprising insights about the way users are using the product, seeking disconfirming information and challenging previously held assumptions about the product and its use cases.

EE: I touched on this a bit in the earlier question. In cases where our hypothesis seems way off in terms of expected behavior vs. actual behavior, we go back to the drawing board and conduct more research. The post launch research is a lot more focused compared to the broader research we would conduct at the beginning of the project. Since at this stage we have identified the areas that specifically differ from our original hypothesis, we focus on only those particular steps in the user flow.

Q4. What is the rollout journey like when you decide to ramp up the feature/product? How do you narrow down on the user groups included in each ramp? 🎛️

AM: We have a phased approach to rolling out a feature/product. In addition to certain customer milestones, we also want to record the customer impact proof-points along the way. A proof-point is something like “After one month of turning on the feature, we were able to improve performance by 50%”. In our world of enterprise SaaS, these proof-points are often used by Customer Success (CS) to justify the new product/feature to customers and help expand its footprint. The proof-point also instills confidence in CS that it's indeed a feature worth advocating.

CM: This varies quite a bit from project to project. The general idea is to make sure your product is stable and bug-free before a GA (general availability) release. On my projects, I have defined the user segments for a rollout based on country, language or the plans that users are on, depending on the type of feature or product we are launching. The ramp can start at 5%-25% of the audience, with milestones at 50% and 75% before we do a GA rollout to 100%.

EE: This absolutely varies by team/product/project, but in my current world, we broadly adhere to 3 launch milestones for our products:

Get the product out to a small number of users/ customers to ensure nothing is broken (sub 10% of a particular customer segment)

Ramp up to a larger subset (~50%) and monitor metrics to see how the product is changing user behavior

Once you have high confidence that you’re solving the people problem and the experiment appears to be working, roll out to 100%

There can be additional nuance to this based on different factors including existing experiments, audience focus, or anticipated product impact; however, this is the general framework.
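One common way to implement percentage ramps like the ones described above is to hash each user into a stable bucket, so that a user who is included at an early stage stays included as the percentage grows. The sketch below is an assumption about how this could be done, not a description of any of our internal tools; the function names, the stage percentages and the optional segment filter are all hypothetical.

```python
import hashlib

# Hypothetical ramp stages, loosely following the milestones above.
RAMP_STAGES = [10, 50, 100]


def bucket(user_id, feature):
    """Map a user to a stable bucket in [0, 100) for a given feature."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id, feature, rollout_percent, user_segment=None, allowed_segments=None):
    """Return True if the feature is on for this user at the current ramp stage.

    allowed_segments optionally restricts the rollout, e.g. to certain
    countries or plans; None means every segment is eligible.
    """
    if allowed_segments is not None and user_segment not in allowed_segments:
        return False
    # Buckets below the rollout percentage are included, so raising the
    # percentage only ever adds users and never removes them.
    return bucket(user_id, feature) < rollout_percent
```

For instance, `is_enabled("user-42", "new-checkout", RAMP_STAGES[0], user_segment="US", allowed_segments={"US"})` checks a hypothetical user against the first stage of a US-only ramp. Including the feature name in the hash keeps different experiments from always selecting the same users.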

Bonus Tip 🏆:

“Product portfolio for a PM is like a diverse investment portfolio, you have your ETFs - the stable projects that you know you will be able to ship. Then you have your big bets which are high risk, high reward. For any PM to be set up for success, it’s important to have a good mix of both”

- Ekow